GLASS: Google Glass Transformation Design

TL;DR

Redesigned Google Glass for blind people, using Google Maps with accessible features and Google TalkBack for navigation, so that blind and partially sighted people can recognize obstacles and distances more easily.

User Challenge

People with low vision encounter many obstacles in cities because of a lack of accessible infrastructure and of accurate navigation features.

I aimed to address real-life navigation issues for blind people and based the research on the main research question.

Duration

3 months

Tools

Adobe XD (App Prototyping)

Blender and 3D Printer (Glass Prototyping)

iPhone's LiDAR Camera (3D Scanning)

ECHOES Application

My Role & Responsibilities

Gathering secondary research

Hand sketch and frame designs

Hi-Fi prototypes for the Google Maps

Interaction design

Accessibility design

Team

Solo project, 1 Product Designer

Supervisor

BACKGROUND

How Might We help blind and partially sighted people navigate to their destination and do their daily routines?

Project Goal

This project aims to develop a design concept that gives blind people a better experience navigating the TMU campus. The research question in my thesis project is "How can technologies related to Google Maps, Google Glass, location-based AR, and LiDAR be utilized to provide a more efficient method of navigating for blind people?"

Pain Points

Because no target users were available to interview, I conducted secondary research to find out what problems blind people face while walking in the city or going about their routines in public areas. The results are gathered as the pain points below:

#1 Temporary Obstacles in the City

#2 Hard to Find the Destination

#3 Less Accessible Infrastructure

#4 Less Accessible Navigation Tools

#5 No GPS Available Indoors

#6 No Inclusive Digital Assistant

EXECUTION SUMMARY

This project was carried out by developing prototypes of Google Maps and Google Glass, designed to be as efficient as possible for blind and partially sighted people to use in everyday life.

First, Google Glass was redesigned by adding LiDAR technology, face and object recognition, a microphone, and bone conduction headphones that connect to Google TalkBack and a live assistant. Location-based AR and Google TalkBack with different interfaces were also added to Google Maps to make it more accessible for blind and partially sighted people.

Google Glass

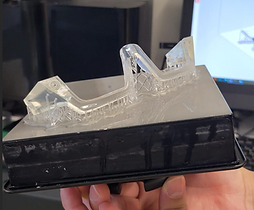

Here is the final high-fidelity 3D-printed mockup of the Glass, with a case that serves as the charger and hardware storage.

The iteration process that led to this prototype is described in the sections that follow.

Google Maps

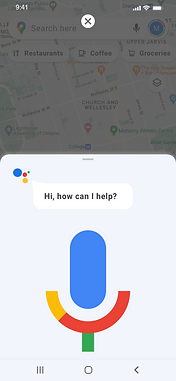

After the Glass, it was Google Maps' turn! I needed a platform that could connect to the Glass. The current Google Maps can already interact with users and take commands through Google TalkBack to find destinations and directions. I added two things to Google Maps: first, a feature to sync with the Glass; second, specific accessibility considerations in colors and shapes so that users with low vision can interact with the app. The prototype simulates the application pop-up and the conversation between the user and the Assistant.

(Sound On)

(Before) Current Google Maps

(After) Redesigned Google Maps

METHODOLOGY

Brainstorm, Plan, and Define

#1

- Brainstorm and narrow down the rough idea

- Secondary research to find problems and pain points

- Plan for the steps to execute the final artifact

Ideate and Design

#2

- Research to define the Persona

- Glass Sketch and lo-fi prototype

- Iterative ideating for Glass

- Google Maps lo-fi prototype

Build

#3

- High-fidelity 3D-printed Glass

- Hi-fi Google Maps prototype

- LiDAR 3D scanning mockup

Defining a Persona

Based on the secondary research and data gathered, Sophia was defined as the target persona.

Problem statements

Sophia is an elementary student who has been blind since birth. She likes to learn and study at school with her sighted friends, because it helps her communicate more and navigate without her parents' assistance. She loves being more independent when she goes out and experiences social life.

“I like to have more quality time with my sighted friends, but they think I'm always dependent on my parents, so they hesitate to ask me to join them. My parents always worry that I may get lost when I go out alone, so I am forced to have them next to me when I'm with friends.”

Brainstorming

In our class sessions, while working on our MRP (final project), we started by ideating on our rough ideas to narrow them down. In the beginning, I had only a vague concept of how I could build a navigation solution for blind people, so I made several mind maps and ran Crazy Eights exercises with random cards to make the project clear enough to continue. This is how I came up with redesigning Google products.

Ideation

After ideating to narrow down the project, with the aim of finding a navigation concept for blind people, I decided to redesign Google Glass to make it usable for blind people and to use other Google products' APIs so that they work together as an Internet of Things system.

Google Glass

After researching the technologies and tools needed to execute my idea, I started sketching the frame, taking into account the electronic chips and features I had to add to the Glass. To keep the Glass practical for everyday use, I had to move some of the hardware to another device. Since users can pair the Glass with their own phone, I placed some of the hardware in the Glass case, which the user can carry while wearing the Glass.

The iterative process then continued with low-fidelity 3D prints of the model, made on FDM printers because they take less time. After several rounds of changes to the model and reprinting, the final hi-fi prototype was printed on an SLA 3D printer.

Ideation sketching for frame design

Iterative Process to get the best frame design

Low fidelity 3d printing (FDM 3d printers)

3D Modeling

High fidelity 3d printing as the final mockup (SLA 3d printers)

TECHNOLOGIES

LiDAR System

LiDAR (Light Detection and Ranging), proposed here for obstacle detection for blind and partially sighted people, detects the orientation of an obstacle and its distance from the person wearing the device. I included it in my product to find out the benefits and drawbacks of this system. One advantage of LiDAR is that it works efficiently in dark environments, which is essential for this Glass.
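To make the idea of "orientation and distance" concrete, here is a minimal sketch of how a depth grid from a LiDAR sensor could be turned into a spoken alert. It is not part of the prototype: the field of view, the alert distance, the 10-degree "ahead" band, and the function names are all assumptions chosen for illustration.

```python
from typing import List, Optional, Tuple

# Hypothetical values for illustration only; they do not come from the project.
HORIZONTAL_FOV_DEG = 60.0  # assumed horizontal field of view of the sensor
ALERT_DISTANCE_M = 2.0     # assumed distance below which the wearer is alerted


def nearest_obstacle(depth_map: List[List[float]]) -> Optional[Tuple[float, float]]:
    """Return (distance_m, angle_deg) of the closest valid reading, or None.

    depth_map is a row-major grid of distances in metres. Values <= 0 are
    treated as missing readings. Angle 0 is straight ahead, negative is left.
    """
    best = None
    for row in depth_map:
        width = len(row)
        for col, distance in enumerate(row):
            if distance <= 0:
                continue  # skip missing readings
            if best is None or distance < best[0]:
                # Map the centre of this column to an angle across the field of view.
                angle = ((col + 0.5) / width - 0.5) * HORIZONTAL_FOV_DEG
                best = (distance, angle)
    return best


def spoken_alert(depth_map: List[List[float]]) -> Optional[str]:
    """Build the sentence a voice assistant could read out, or None if clear."""
    hit = nearest_obstacle(depth_map)
    if hit is None or hit[0] > ALERT_DISTANCE_M:
        return None
    distance, angle = hit
    if angle < -10:
        side = "on your left"
    elif angle > 10:
        side = "on your right"
    else:
        side = "ahead"
    return f"Obstacle {side}, {distance:.1f} metres"
```

A real device would work on a much denser depth map and would need filtering over time, but the mapping from "closest reading" to "direction plus distance" would follow the same pattern.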

To simulate how a LiDAR camera works, I recorded the path with both a LiDAR scanner and a normal camera to see the differences. Then I added sound to act as a live assistant. Part of the video captured with the live assistant on it is included in the prototype above.

This mockup shows how it feels to use the Glass as a blind person.

LiDAR Scanner

Normal Camera

Location-Based AR

Location-based Augmented Reality works with GPS and GIS. As an assistive tool for blind people's navigation, it is integrated with a voice guide interface that alerts users about their location and destinations.
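As a rough sketch of the mechanism, a location-based voice guide can be reduced to a geofence check: when the user's GPS position comes within a set radius of a point of interest, its message is read out. The point structure, the field names, and the coordinates below are hypothetical placeholders, not data from the project or from any platform's API.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def triggered_messages(user_lat: float, user_lon: float, points: list) -> list:
    """Return the voice messages of every point whose radius contains the user."""
    return [
        p["message"]
        for p in points
        if haversine_m(user_lat, user_lon, p["lat"], p["lon"]) <= p["radius_m"]
    ]


# Hypothetical points of interest with placeholder coordinates.
POINTS = [
    {"lat": 10.0000, "lon": 20.0000, "radius_m": 15.0,
     "message": "You are at the library entrance."},
    {"lat": 10.0100, "lon": 20.0000, "radius_m": 15.0,
     "message": "You are at the cafeteria."},
]

if __name__ == "__main__":
    for message in triggered_messages(10.00005, 20.0, POINTS):
        print(message)
```

GPS accuracy outdoors is typically several metres, so the radius has to be generous enough to trigger reliably without overlapping neighbouring points.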

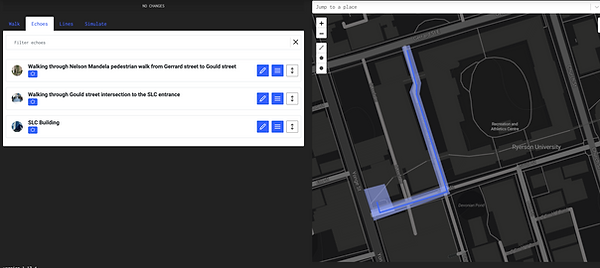

ECHOES is a platform that uses location-based AR, mostly for tours. It inspired the stopgap solution I used for my Google Maps prototype. I also added my project to this platform to simulate what using Google Glass would feel like for blind people; experiencing it requires downloading the app and being present at the location (the TMU campus, as the case study).

ECHOES Website for Creators

ECHOES App for Explorer

Face & Object Recognition

Another must-have feature for this kind of assistant is face and sign recognition, which uses edge detection techniques to recognize signs and faces. This feature is connected to the Google Vision API for object detection and can detect familiar faces through the user's Google Photos.
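To illustrate what an edge detection step does, here is a small sketch using the Sobel operator on a grayscale grid. Sobel is one common choice and is my assumption here; the concept does not specify which technique would be used, and this is not how the Google Vision API is called. The function name and threshold are hypothetical.

```python
from typing import List


def sobel_edges(image: List[List[int]], threshold: float) -> List[List[bool]]:
    """Mark pixels whose Sobel gradient magnitude reaches the threshold.

    image is a row-major grid of grayscale values. Border pixels are left
    as False because the 3x3 kernel does not fit there.
    """
    height, width = len(image), len(image[0])
    edges = [[False] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Horizontal gradient: right column minus left column.
            gx = (image[y - 1][x + 1] + 2 * image[y][x + 1] + image[y + 1][x + 1]) - (
                image[y - 1][x - 1] + 2 * image[y][x - 1] + image[y + 1][x - 1]
            )
            # Vertical gradient: bottom row minus top row.
            gy = (image[y + 1][x - 1] + 2 * image[y + 1][x] + image[y + 1][x + 1]) - (
                image[y - 1][x - 1] + 2 * image[y - 1][x] + image[y - 1][x + 1]
            )
            edges[y][x] = (gx * gx + gy * gy) ** 0.5 >= threshold
    return edges
```

On an image that is dark on the left and bright on the right, the marked pixels line up along the boundary, which is the kind of outline a sign or face recognizer could build on.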

Google Glass Details

Finally, the concept was proposed as shown below, with all of these technologies implemented in the prototype.

The main challenge I faced, and one that has not been solved, was battery life. To improve battery life and allow better-quality electronic chips in a larger space, while keeping the Glass frame close to an everyday design, some of the components would be moved to the Glass case.

TAKEAWAYS

After going through the whole process of this final project, and facing many challenges in trying to interview the blind community, I understood that any accessible device must first be accepted by its target user community before it can serve as an assistant.

I also aimed to use the latest technology trends to determine their pros and cons, and found that these trends can make or break the final product. For example, the LiDAR system works well for obstacle detection in dark environments, but it cannot detect glass or level changes, which is a critical issue in indoor navigation. Moreover, researchers are still struggling to improve the battery life of similar products.

Challenges

The most important challenge in this process was that I was unable to reach the blind community, because this type of project was described as a "Disability Dongle" that would not be useful to them.

Also, as mentioned, choosing technology that would work well for this concept was a challenge; I decided to go with the latest trends to find out their advantages and disadvantages.

Future Works

This project aims to become more inclusive so that it can be used in different contexts. For instance, in the future the Glass could be used as a translator for tourists or for anyone living in a society with a different language.

Moreover, I would continue working on the hardware and software of the Glass product.

I will also interview members of the blind community about this concept to find out their pain points and improve it.